Purpose #

This entry is not a study guide for the ITIL 4 Foundation exam. It is a crosswalk between ITIL 4’s formal vocabulary and twenty years of operational experience solving the same problems ITIL was designed to name. The distinction matters. Most ITIL preparation material teaches you to pass a test. This entry assumes you have already been doing the work and need the credential to clear HR filters that were written without you in mind.

The exam itself is 40 closed-book multiple-choice questions with a 65% pass threshold, which means 26 correct answers. For someone who has operated service delivery and coordination architecture at scale across autonomous organizational entities, the vocabulary is the only gap. This crosswalk closes that gap by anchoring ITIL 4 concepts to operational patterns you already recognize.

The Core Argument #

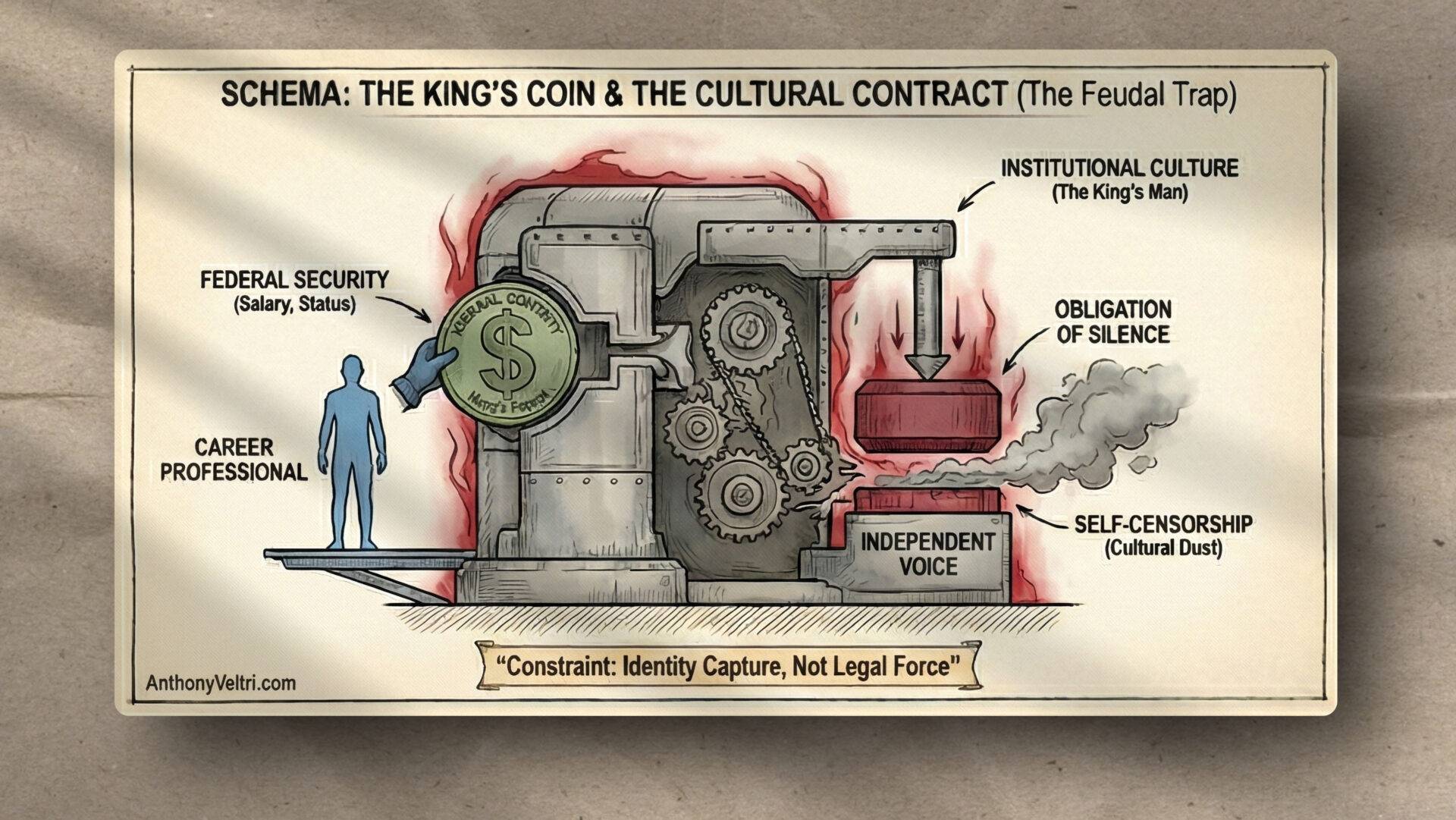

ITIL 4 is a service federation vocabulary. Its shift from v3 (process-heavy, function-centric) to ITIL 4 (value streams, guiding principles, the Service Value System) represents a maturation toward the same architectural reality that anyone who has coordinated across autonomous entities already knows: you cannot mandate compliance from partners who do not report to you. You can only design interfaces they are willing to use.

The practitioners who need ITIL most are rarely the ones studying it. They are the people who have been running service coordination for years across organizations that do not share a command structure, a schema, or sometimes even a common vocabulary for the same operational condition. ITIL 4 gives that work a name.

The Obvious Question: Didn’t ITIL 4 Already Do This? #

A sophisticated reader of this document will ask a fair question. ITIL 4 talks about partner and supplier relationships. It talks about value streams across organizational boundaries. It has guiding principles that push back on rigid process compliance. It is clearly moving toward the same architectural reality that federation doctrine describes. So did ITIL 4 beat federation doctrine to the punch?

No. And the distinction is not semantic.

ITIL 4 assumes that a service relationship exists or can be established. Even in its most evolved framing, the framework assumes you have standing to negotiate agreements, require change notification, define service levels, and establish governance structures that carry at least contractual weight. The partner and supplier dimension describes how to manage relationships with external organizations — but managing a relationship requires that a relationship exists, which means both parties have agreed to participate under some defined terms. ITIL 4 describes how to coordinate across boundaries where you have standing to make demands, even if those demands require negotiation to fulfill.

Federation doctrine addresses environments where that standing does not exist and cannot be created. The entities being coordinated are institutionally sovereign. They do not report to the coordinating function. They did not contract with it. In many cases they carry legal mandates that would prohibit subordinating their operations to any external authority, regardless of how that authority is framed. You are not managing a service relationship. You are creating the conditions under which autonomous entities will voluntarily coordinate because the alternative is operational failure that costs everyone including them. That is not a service design problem. It is a coordination architecture problem at a layer ITIL 4 has not yet reached.

The analogy: ITIL 4 is a framework for managing services inside buildings that have walls, a roof, and an address. Federation doctrine is about coordinating activity across a terrain where the buildings are sovereign and you do not own the roads between them. ITIL 4 gives you vocabulary for what happens inside any given building and for negotiating agreements between building owners. It does not give you a framework for the roads — the interfaces between sovereign entities where no one has authority and coordination either happens or it does not.

ITIL 4 is visibly moving in this direction. The shift from v3 to v4 is evidence of that movement: away from rigid process compliance, toward value streams and guiding principles, toward acknowledging that the partner and supplier dimension is where real coordination complexity lives. But that movement is happening from inside the service management tradition, expanding outward from an assumed service relationship toward the edge cases where relationships are informal or nonexistent. Federation doctrine was built from the outside in: constructed from operational experience in environments where service management frameworks had already run out of authority, and something else had to take over.

The overlap between ITIL 4 and federation doctrine is real and significant. That is why this crosswalk is worth producing. ITIL 4 vocabulary applies to substantial portions of what federation architecture practitioners do every day, and carrying that vocabulary fluently opens doors that operational competence alone does not. But the crosswalk works in both directions. ITIL 4 borrows from the problem space that federation doctrine was built to address. It has not yet fully arrived there. The credential is worth having. The doctrine is not subordinate to the framework.

The Illustrating Problem: Interface Failure Without Fault #

The clearest way to understand ITIL 4’s partner and supplier dimension is through a failure mode that traditional IT service management frameworks handle poorly: the interface break where nothing is broken.

In operational geospatial environments, a common scenario unfolds as follows. A partner organization publishes a data feed that your system ingests. The feed is reliable. Your ingest process is reliable. The interface between them is stable. Then the partner migrates their output format from shapefile to GeoJSON, which is entirely reasonable on their end. Your ingest process breaks, which is entirely reasonable on your end given what it was built to expect. No one made an error. No policy was violated. The service failed because the interface had no governance.

This is not a technology problem. It is a coordination architecture problem. ITIL 4 addresses it through the partner and supplier dimension of its Four Dimensions model, and through the practice of change enablement, which in this context means that the partner’s format migration was a change that needed notification and coordination, not just execution. The change was internal to their system. Its impact was external to their boundary. Without an interface stewardship agreement, neither side has standing to require the other to manage that impact.

The lesson is not that partners should notify you of changes. The lesson is that the service design has to account for interface volatility as a standard operating condition, not an exception. ITIL 4 calls this designing for resilience. Practitioners in federated operational environments call it not depending on things you cannot control.
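Treating interface volatility as a standard operating condition can be sketched in a few lines. The example below is a minimal illustration, not a description of any real ingest system: the names `FeedFormat`, `sniff_format`, `check_feed`, and `InterfaceDrift` are hypothetical. It inspects the leading bytes of a payload and raises a named error when the observed format differs from the one the ingest process was built to expect, so the break surfaces as interface drift rather than as a parser crash.

```python
import json
import struct
from enum import Enum


class FeedFormat(Enum):
    GEOJSON = "geojson"
    SHAPEFILE = "shapefile"
    ZIP_ARCHIVE = "zip"  # shapefile bundles are commonly shipped zipped
    UNKNOWN = "unknown"


# Object types defined by the GeoJSON specification (RFC 7946).
GEOJSON_TYPES = {
    "FeatureCollection", "Feature", "GeometryCollection",
    "Point", "MultiPoint", "LineString", "MultiLineString",
    "Polygon", "MultiPolygon",
}


class InterfaceDrift(Exception):
    """Hypothetical error: the feed no longer matches what ingest expects."""


def sniff_format(payload: bytes) -> FeedFormat:
    """Guess the format of a feed payload from its content."""
    # A .shp main file starts with the big-endian integer 9994 (file code).
    if len(payload) >= 4 and struct.unpack(">i", payload[:4])[0] == 9994:
        return FeedFormat.SHAPEFILE
    # ZIP archives start with the bytes "PK".
    if payload[:2] == b"PK":
        return FeedFormat.ZIP_ARCHIVE
    try:
        doc = json.loads(payload.decode("utf-8"))
    except (UnicodeDecodeError, json.JSONDecodeError):
        return FeedFormat.UNKNOWN
    if isinstance(doc, dict) and doc.get("type") in GEOJSON_TYPES:
        return FeedFormat.GEOJSON
    return FeedFormat.UNKNOWN


def check_feed(payload: bytes, expected: FeedFormat) -> FeedFormat:
    """Return the observed format, or raise InterfaceDrift on a mismatch."""
    observed = sniff_format(payload)
    if observed is not expected:
        raise InterfaceDrift(
            f"expected {expected.value}, observed {observed.value}"
        )
    return observed
```

A check like this does not require any authority over the partner. It only makes the ingest side honest about what it depends on, which is the precondition for any conversation about interface stewardship.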

Supporting Pattern: Vocabulary Collapse Under Operational Pressure #

The geospatial feed failure is clean because the format mismatch is machine-detectable. A harder version of the same problem occurs when the mismatch is semantic rather than structural, and the system continues to function while producing wrong outputs.

Wildland fire operations offer a clear example. The field assigns a danger rating of High to a given area based on integrated operational judgment. The meteorological component defines High danger as a compound condition: relative humidity below a defined threshold, wind speed above a defined threshold, and fuel moisture below a defined threshold. The field collapses those inputs into a single categorical label. The meteorological component maintains the underlying numerical values. Both representations are valid within their operational context.

The problem emerges at the interface. A coordination system that ingests both feeds will encounter two different schema expressions for the same operational reality. If it treats them as equivalent without resolving the representation difference, it is building decisions on a false semantic equality. If it treats them as incompatible, it discards valid information from one feed or the other.

ITIL 4 addresses this through information and technology as a dimension, specifically through the emphasis on shared information models and the governance of how information flows across service boundaries. The vocabulary for this in ITIL is service configuration management and service catalogue management. The operational vocabulary for the same problem is schema governance. They are describing the same gap.
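One way to avoid both failure modes (false equality and discarded information) is to keep the two representations side by side and record whether they agree. The sketch below is illustrative only. The threshold values are placeholders invented for the example, not real fire-weather criteria, and the class and function names are hypothetical.

```python
from dataclasses import dataclass

# Placeholder thresholds for illustration only. Real criteria belong to the
# meteorological component and are not given in this entry.
RH_MAX_PCT = 20.0
WIND_MIN_MPH = 15.0
FUEL_MOISTURE_MAX_PCT = 8.0


@dataclass(frozen=True)
class FieldRating:
    area: str
    label: str  # categorical operational judgment, e.g. "High"


@dataclass(frozen=True)
class MetObservation:
    area: str
    relative_humidity_pct: float
    wind_speed_mph: float
    fuel_moisture_pct: float

    def meets_high_criteria(self) -> bool:
        """Compound condition: all three thresholds must be crossed."""
        return (
            self.relative_humidity_pct < RH_MAX_PCT
            and self.wind_speed_mph > WIND_MIN_MPH
            and self.fuel_moisture_pct < FUEL_MOISTURE_MAX_PCT
        )


@dataclass(frozen=True)
class Reconciled:
    area: str
    field_label: str      # preserved as reported by the field
    met_says_high: bool   # derived from the numeric values
    agrees: bool          # False means the interface needs a human look


def reconcile(field: FieldRating, met: MetObservation) -> Reconciled:
    """Keep both representations and flag disagreement instead of hiding it."""
    if field.area != met.area:
        raise ValueError("ratings refer to different areas")
    met_high = met.meets_high_criteria()
    field_high = field.label.strip().lower() == "high"
    return Reconciled(field.area, field.label, met_high, field_high == met_high)
```

The design choice is that neither feed overwrites the other. A disagreement is carried forward as data, which gives whoever stewards the schema something concrete to govern.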

Supporting Pattern: Human Stakes and the Recovered Problem #

The most consequential version of schema mismatch occurs when the schema is a word rather than a format, and the stakes are human outcomes rather than data integrity.

I know this from direct experience. In 2018 I contracted West Nile virus and subsequently developed Guillain-Barré syndrome. What followed was a multi-year navigation across a fragmented service ecosystem (physicians, Department of Labor, insurers, rehabilitation specialists) in which the single word “recovered” meant something different to every actor involved, and no one held stewardship of the vocabulary across the boundaries between them.

To the Department of Labor, recovered meant functional independence: the capacity to perform activities of daily living, to dress myself, to sustain employment. To my treating physician, recovered meant maximum medical improvement (the point at which further clinical intervention was unlikely to produce measurable change). To me, recovered meant return to my pre-event baseline: the functional state I was in before the illness.

These three definitions are not wrong. Each is internally consistent within the service context that produced it. The Department of Labor definition is built around workforce participation. The clinical definition is built around treatment efficacy. My definition is built around lived experience of my own body before and after a catastrophic event. All three are legitimate. None of them are the same thing.

The problem is that I was navigating all three simultaneously, and no one in the system had standing or responsibility to reconcile them. Care coordination failed not because any individual provider was incompetent, but because the vocabulary had no steward at the boundary between services. The word recovered moved across organizational lines carrying a different semantic payload each time, and the system had no mechanism to detect or correct the mismatch.

ITIL 4 frames this as a service integration problem. The relevant practices are service desk (as the point where definitional conflicts surface), incident management when the conflict produces a care failure, and continual improvement when the pattern points to a systemic vocabulary gap. The failure I experienced was not a clinical error. It was a service design error. ITIL 4 provides a framework for locating it correctly, even if it took me years of operational pattern recognition to name it that way.

Supporting Pattern: Organizations and People — When the Metric Defeats the Mission #

The previous three patterns involve schema mismatches at technical or semantic interfaces. This one is different. It is about what happens when the organizational structure itself creates incentives that contradict the mission the organization is supposed to be serving.

Around 2010, the USDA Forest Service undertook a storage migration. Every workstation had a mapped T Drive (a network drive pointing to centralized storage managed out of a data center in Kansas City, predating real cloud infrastructure by several years). The intent was sound: centralize the authoritative record, make it discoverable, ensure continuity when personnel turned over. The T Drive was supposed to be where the golden dataset lived.

The problem was that the T Drive filled up. Nationally. And when it filled up, the response was to weight each organizational unit against its storage consumption and pressure high-use units to reduce their footprint.

Our unit was GIS. We had large datasets because GIS datasets are large. That is not a configuration problem. It is a domain characteristic. The storage allocation model was designed for policy offices and administrative functions and applied uniformly to everyone, including units whose operational output was measured in gigabytes per project.

The perverse incentive this created was predictable. We were not going to make the datasets smaller — the datasets were the work product. So we found workarounds. Local copies on portable hard drives. Reconstitution from partial archives. Informal agreements about who held the current authoritative version of what. The golden dataset, the thing the T Drive was explicitly designed to protect and centralize, migrated to whoever’s portable hard drive happened to have the most recent complete version.

Institutional memory became personally portable. That means it was fragile, undiscoverable by anyone outside the immediate team, and departure-dependent. When someone left the organization, they may have taken the golden dataset with them without anyone knowing it was gone until someone needed it.

The emails that came down during this period have stayed with me. Their message was always the same: the CIO has their top people working on it. I thought of the scene from Raiders of the Lost Ark every time. Top men. The phrase communicates accountability without conveying capability or authority to resolve the underlying structural problem. Someone owned the T Drive problem. Nobody fixed it. Those are not contradictory statements in a large federal organization, and the distance between them is exactly what ITIL’s Organizations/People dimension is designed to diagnose.

The problem was never storage. The problem was that the organizational metric (uniform storage limits applied across heterogeneous use cases) was optimized for administrative simplicity at the expense of operational function. The units that needed more storage were the units doing the most technically intensive mission work. The metric punished capability.

We eventually migrated to Box.com, which became the informal infrastructure that preceded its formal adoption as a service badged as Pinon. Shadow IT becoming official infrastructure is a service transition that happened backwards. The workaround became the solution because the solution never came. ITIL 4 would call that a continual improvement failure: the pattern of workarounds was visible organizational data pointing to a structural problem that was never formally addressed.

In ITIL terms the Organizations/People dimension encompasses culture, roles, and communication structures. The T Drive failure was all three simultaneously. The culture did not have a mechanism for translating operational requirements into infrastructure allocation decisions. The role responsible for the T Drive did not have authority to redesign the allocation model. The communication structure produced email reassurances rather than structural remediation. All four dimensions of ITIL are supposed to be designed in relationship with each other. Here the organizational dimension and the information/technology dimension were designed in isolation, and the conflict played out in the field while the golden datasets lived on portable hard drives in people’s bags.

ITIL 4 Concept Map for Practitioners #

The following crosswalk is not exhaustive. It covers the concepts most likely to appear on the Foundation exam and maps each to the operational pattern it describes.

Service Value System. The overarching framework connecting governance, guiding principles, the Service Value Chain, practices, and continual improvement into a coherent whole. For practitioners who have operated across federated environments, this is the recognition that service delivery is never a single-organization problem. It always involves inputs, outputs, and interdependencies across boundaries.

Service Value Chain. The six activities (plan, improve, engage, design and transition, obtain and build, deliver and support) represent a coordination loop, not a linear process. Anyone who has run disaster response operations or multi-component program management has been operating a service value chain without the label. The label matters because it creates shared vocabulary for diagnosing where in the loop a failure originated.

Four Dimensions. Organizations and people, information and technology, partners and suppliers, value streams and processes. The partner and supplier dimension is where federated operations live. The information and technology dimension is where schema governance lives. Most operational failures that feel like technology problems are actually located in one of these two dimensions.

Guiding Principles. ITIL 4 articulates seven. The most operationally significant for practitioners coming from complex program environments are “start where you are” (do not discard working institutional knowledge in favor of theoretical ideals), “focus on value” (every activity should be traceable to an outcome that matters to someone), and “think and work holistically” (no service component operates in isolation from the system it inhabits).

Change Enablement. The practice governing how changes to services and infrastructure are introduced without unnecessary disruption. The GeoJSON migration scenario is a change enablement failure. The partner organization made a technically valid change with no coordination at the interface. Change enablement practice would have required communication, a transition period, or a versioned feed that allowed both formats simultaneously. None of those interventions require authority over the partner. They require interface stewardship agreements established before the change occurs.

Continual Improvement. The ongoing practice of identifying and implementing improvements at every level of the service organization. For practitioners, this is the distinction between a project (bounded, delivered, complete) and operational stewardship (ongoing, iterative, never finished). Most of the coordination work that produces durable outcomes operates in continual improvement mode, not project mode. ITIL 4 legitimizes that framing.

The Seven Guiding Principles: Operational Illustrations #

The guiding principles are the most heavily tested conceptual area on the Foundation exam after incident and problem management. The exam does not ask you to recite them. It presents a scenario and asks which principle it illustrates. The fastest path to reliable recognition is anchoring each principle to an operational moment rather than a definition.

Focus on value. Every activity should be traceable to an outcome that matters to a stakeholder. The T Drive’s storage allocation model failed this principle directly. Uniform limits were administratively convenient but they were not traceable to any operational outcome that mattered to the GIS unit, to the field, or to the mission. When you cannot draw a line from an activity or a policy to a stakeholder outcome, the activity or the policy is overhead. The exam question will describe something that exists because it has always existed, and ask what principle suggests evaluating whether it should continue to exist. Focus on value.

Start where you are. Assess what exists before designing what should exist. Do not discard working institutional knowledge in favor of theoretical ideals. Most of my career has been brownfield coordination — inheriting operational systems and improving them without disruption, federating existing capabilities because building new ones was not an option, and working with the organizational structures that existed because reorganizing them was outside the scope of the mission. This principle legitimizes that mode of operation. The exam question will describe an organization that wants to scrap everything and start fresh. The principle that pushes back on that instinct is this one.

Progress iteratively with feedback. Do not wait for perfect conditions. Deliver in increments and use feedback to correct course. The iCAV system that eventually became DHS GII was not designed in its final form and deployed. It grew through operational use, feedback from the field, and iterative refinement over years. The exam question will describe a choice between a big-bang implementation and an incremental one. The principle that favors the incremental approach is this one.

Collaborate and promote visibility. Involve the right people and make work visible. The visibility half of this principle is underemphasized in most preparation material and it is the operationally important half. The T Drive’s golden dataset problem was in part a visibility failure (nobody outside the immediate team could see where the authoritative version was, who had it, or when it had last been updated). Information radiators, shared status dashboards, and coordination feeds are all implementations of this principle. The exam question will describe a team working in isolation whose outputs surprise stakeholders. The principle that addresses isolation is this one.

Think and work holistically. No component operates in isolation from the system it inhabits. Changes to one part affect the whole. The Akamai power scenario, described later in this entry, illustrates this principle from a diagnostic angle: the fault was below the model’s boundary conditions, which means the diagnostic approach had to expand beyond the obvious system to find it. The GeoJSON migration illustrates it from a change management angle: the partner optimized locally and broke the system holistically without intending to. The exam question will describe a change that improved one component while degrading overall service. The principle that names that failure is this one.

Keep it simple and practical. Eliminate steps that do not contribute to value creation. This is not a license for cutting corners. It is a principle against complexity theater (processes that exist because someone thought they should, not because they produce outcomes). The storage allocation model that generated email reassurances rather than structural fixes was complexity theater. The email had the form of accountability without the function of it. The exam question will describe an elaborate process with steps that cannot be traced to outcomes. The principle that challenges unnecessary complexity is this one.

Optimize and automate. Optimize the process first, then automate it. Automating a broken process produces broken outputs faster. The exam tests this sequence specifically because the instinct in technology environments is to reach for automation before the underlying workflow is understood. If you automate the T Drive allocation model without fixing the uniform limit problem first, you have automated the perverse incentive. The exam question will describe an automation initiative that is not producing the expected improvement. The likely diagnosis is that the process was automated before it was optimized.

Knowledge Management and the DIKW Model: A Practitioner’s Implementation #

ITIL 4’s knowledge management practice is one of the most abstract in the framework. The official definition (maintain and improve the effective, efficient, and convenient use of information and knowledge across the organization) is accurate and not very useful as a study anchor. The DIKW model it references is more tractable.

DIKW stands for Data, Information, Knowledge, Wisdom. It is a hierarchy describing how raw observations become actionable judgment.

Data is a fact without context. A sensor reading. A log entry. A coordinate pair. By itself it tells you nothing about what to do.

Information is data with context. The sensor reading placed against a threshold, interpreted against a standard, associated with a time and location. Now it means something, but it is still descriptive.

Knowledge is information applied. The pattern recognition that says this combination of sensor readings has preceded a specific failure mode seventeen times in the last three years, so the probability of that failure mode is elevated. Knowledge requires experience. It is the difference between reading a map and navigating terrain.

Wisdom is knowing which knowledge to apply when. This is the senior practitioner’s contribution. The junior analyst has data. The experienced operator has knowledge. The domain expert knows which knowledge is relevant to this specific situation and which should be set aside. Wisdom is judgment about knowledge, not just its possession.
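The first three levels of the hierarchy can be made concrete with a small sketch. Everything here is hypothetical and chosen for illustration: the `Reading` and `Assessment` types, the threshold, and the idea of representing operational history as pairs of (threshold exceeded, failure followed). Wisdom is deliberately absent from the code, because deciding whether this estimate is the right knowledge to apply stays with the practitioner.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Reading:
    """Data: a fact without context."""
    sensor_id: str
    value: float


@dataclass(frozen=True)
class Assessment:
    """Information: the same fact placed against a threshold."""
    reading: Reading
    threshold: float
    exceeds: bool


def to_information(reading: Reading, threshold: float) -> Assessment:
    """Add context to a raw reading by comparing it with a standard."""
    return Assessment(reading, threshold, reading.value > threshold)


def to_knowledge(
    assessment: Assessment,
    history: List[Tuple[bool, bool]],
) -> Optional[float]:
    """Knowledge: how often has this condition preceded the failure mode?

    `history` holds (exceeded_threshold, failure_followed) pairs from past
    operations. Returns the observed failure fraction for past cases that
    match the current condition, or None when there is no experience yet.
    """
    matching = [failed for exceeded, failed in history
                if exceeded == assessment.exceeds]
    if not matching:
        return None
    return sum(matching) / len(matching)
```

The `None` return is the point of the exercise: without prior experience there is information but no knowledge, which is why the hierarchy cannot be climbed by collecting more data alone.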

I have spent the better part of a decade building a documentation infrastructure that is a direct implementation of this model at organizational scale. Field notes capture data and information (specific operational moments with enough context to be legible to someone who was not present). Doctrine entries synthesize field notes into knowledge (principles that apply across situations, patterns that recur across domains). The bidirectional reference structure between them is an explicit attempt to preserve the traceability from principle back to the operational data that generated it.

The RS-CAT framework (Retrieval, Sequencing, Compression, Abstraction, Teachability) is essentially a DIKW processing methodology applied to lived experience. Retrieval surfaces the raw operational data. Sequencing provides the information context (what happened before, what came after, what the situation required). Compression eliminates what does not contribute to the transferable insight. Abstraction moves from the specific case to the generalizable principle, which is the transition from information to knowledge. Teachability is the test of whether the knowledge has been rendered legible enough to transfer, which requires wisdom about what the audience needs to understand it.

ITIL 4 would recognize all of this as knowledge management practice. The exam will not ask about RS-CAT. It will ask which practice is responsible for ensuring that information is available when and where it is needed, and that institutional knowledge is not lost when personnel turn over. The answer is knowledge management. The T Drive golden dataset failure was also a knowledge management failure: the authoritative record was undiscoverable and departure-dependent because the knowledge management infrastructure was subordinated to the storage allocation metric.

On Certification Strategy #

The ITIL 4 Foundation exam tests vocabulary recognition, not operational judgment. The pass threshold of 65% is achievable by anyone who understands the concepts as operational reality rather than memorized definitions. The recommended approach is to read the official AXELOS Foundation guide once for vocabulary acquisition, take two to three practice exams to identify any gaps in terminology, and trust operational pattern recognition to carry the rest.

The more important output of this preparation is not the credential. It is the ability to use ITIL 4 vocabulary fluently in service design conversations, procurement contexts, and governance discussions where that vocabulary is the expected lingua franca. The credential clears the HR filter. The vocabulary enables the work.

The Vocabulary Collision: Problem, Decision, or Capability Gap #

Why This Section Exists #

The most common failure mode for experienced operators taking the ITIL Foundation exam is not ignorance of the underlying concepts. It is arriving with a more precise vocabulary than the framework uses, and losing points because the answer that reflects deeper understanding does not match the answer the question was written to receive.

The incident versus problem distinction is the clearest example of this collision. It is also the entry point into a broader taxonomy that ITIL does not fully articulate but that any serious operational practitioner has developed through necessity.

The Operational Taxonomy #

In rigorous operational practice, a problem requires three conditions to be simultaneously true.

First, deviation from a standard. You were able to perform this function before. A baseline existed. Performance has degraded from that baseline. Without a prior baseline, there is no deviation, and therefore no problem in any analytically useful sense.

Second, cause unknown. If you know why the deviation is occurring, the analytical phase is closed. You no longer have a problem. You have a decision.

Third, mission significance. If the deviation does not affect outcomes that matter, it does not enter the problem queue. The significance filter is not laziness. It is the recognition that unlimited troubleshooting resources do not exist and triage is a professional obligation.

When all three conditions are present, problem management is the correct response: identify root cause, document findings, implement or recommend remediation.

When any one condition is absent, a different classification applies, and a different operational response is required.

The Collapse Logic #

If cause is known: it is a Decision.

The analytical work is done. Someone needs to act, defer, or accept the known condition as managed risk. Keeping this inside the problem management queue ties up diagnostic resources on work that is already complete. The correct handoff is to whoever owns the remediation decision — facilities, budget authority, program management — with a clear problem closure record and a documented recommendation.

ITIL calls this condition a “known error” and manages it inside problem management practice. That classification is defensible as an administrative category. As an operational workflow, it creates ambiguity about when problem management’s work is actually finished. In a high-tempo environment, that ambiguity is a coordination cost.

If mission significance is absent: it is Noise.

Not every deviation from a standard requires resolution. Filtering noise from signal is itself an operational competency. A system that treats all deviations as problems produces a problem queue that cannot be prioritized and a team that cannot distinguish what matters from what does not. ITIL’s problem management practice does not provide a significance filter. Practitioners have to build one themselves or inherit organizational chaos.

If no baseline exists: it is a Capability Gap.

This is the most consequential misclassification because it produces a specific and recognizable failure mode. A team assigned to troubleshoot a capability gap will search for a root cause that does not exist, because there was never a prior state to return to. The correct response is not problem management. It is capability development: design, resource allocation, build, and validation against a new standard that did not previously exist.

You cannot troubleshoot your way to a capability that was never built.
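The collapse logic reads naturally as a small decision function. The sketch below is one way to write it, with hypothetical names (`Condition`, `Classification`, `classify`). The taxonomy above does not say which label wins when more than one condition is absent, so the order of checks here (baseline, then significance, then cause) is an assumption made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Classification(Enum):
    PROBLEM = "problem"                # all three conditions hold
    DECISION = "decision"              # cause is already known
    NOISE = "noise"                    # no mission significance
    CAPABILITY_GAP = "capability gap"  # no prior baseline ever existed


@dataclass(frozen=True)
class Condition:
    has_prior_baseline: bool    # could this function be performed before?
    cause_known: bool           # is the analytical phase already closed?
    mission_significant: bool   # does it affect outcomes that matter?


def classify(c: Condition) -> Classification:
    """Apply the three-condition test.

    Order of checks is an assumption: baseline first, then significance,
    then cause. The source taxonomy does not define a precedence.
    """
    if not c.has_prior_baseline:
        return Classification.CAPABILITY_GAP
    if not c.mission_significant:
        return Classification.NOISE
    if c.cause_known:
        return Classification.DECISION
    return Classification.PROBLEM
```

For example, `classify(Condition(has_prior_baseline=True, cause_known=False, mission_significant=True))` yields `Classification.PROBLEM`, and flipping `cause_known` to `True` moves the same condition to `Classification.DECISION`, which is the handoff point described above.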

The Akamai Scenario: Below the Model’s Boundary #

In 2008, at an emergency relocation site, a set of Akamai appliances (each weighing approximately six thousand pounds due to iron hard drive arrays) was exhibiting intermittent degraded performance after boot. The symptom profile suggested a software or configuration issue. Troubleshooting proceeded at the log level, which is to say inside the OSI model, where the tools and methodologies for network service diagnosis live.

The troubleshooting produced no actionable findings because the fault was not located in any of the model’s seven layers.

Physical inspection and a digital multimeter identified the actual cause: inadequate grounding on the 220-volt feed serving the power center in the older building. The appliances were receiving power, passing basic electrical checks, and initializing normally. The grounding deficiency was producing effects that appeared at the application layer as intermittent anomalies with no consistent log signature.

The fault was at what you might call layer zero: physical plant infrastructure below the boundary conditions the OSI model is designed to represent.

By the operational taxonomy above, once the grounding deficiency was identified, the problem was closed. A known cause existed. The classification changed immediately from problem to decision: repair the electrical infrastructure, replace the appliances, or document the condition as a managed risk with operational constraints. The decision belonged to whoever owned the facility and the budget. The diagnostic work was done.

In ITIL terms, this would be a “known error” record awaiting a change to implement the fix. The vocabulary is not wrong. It is just less precise about the transition point between analytical work and decision authority. In a complex operational environment, that imprecision has a coordination cost.
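The transition described above, from problem to decision once a cause is known, can be sketched as a small decision function. This is a minimal illustration under stated assumptions, not part of ITIL or any ITSM tool: the names `Classification` and `classify`, the `NOISE` label, the three boolean inputs, and the order of the checks are all hypothetical, inferred from the rules stated in this section.

```python
from enum import Enum


class Classification(Enum):
    # Labels are illustrative; only "capability gap", "problem", and
    # "decision" are named in the text. "noise" is an assumed label for
    # deviations that do not warrant resolution.
    CAPABILITY_GAP = "capability gap"
    NOISE = "noise"
    PROBLEM = "problem"
    DECISION = "decision"


def classify(baseline_exists: bool, significant: bool, cause_known: bool) -> Classification:
    """Hypothetical sketch of the operational taxonomy described in the text."""
    if not baseline_exists:
        # No prior state to return to: build the capability, do not troubleshoot.
        return Classification.CAPABILITY_GAP
    if not significant:
        # A deviation that does not require resolution.
        return Classification.NOISE
    if cause_known:
        # Analytical work is done; the next step belongs to decision authority.
        return Classification.DECISION
    # Significant deviation from a baseline with no known cause.
    return Classification.PROBLEM


# The Akamai scenario before and after the multimeter found the grounding fault.
before = classify(baseline_exists=True, significant=True, cause_known=False)
after = classify(baseline_exists=True, significant=True, cause_known=True)
print(before.value, "->", after.value)  # problem -> decision
```

Checking for a baseline first reflects the point that a capability gap has no root cause to find, so no amount of significance filtering or cause analysis applies to it.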

The deeper lesson from this scenario is methodological. The OSI model is a powerful diagnostic tool within its domain. Expert pattern recognition includes knowing when symptoms do not fit any layer in the model, which means the fault is outside the model’s boundary conditions entirely. That recognition is not a failure of the model. It is a competency the model cannot teach.

The Exam Translation #

For Foundation exam purposes, the vocabulary that matters is ITIL’s, not the more precise operational taxonomy. The translation table is as follows.

ITIL’s “incident” is any unplanned interruption or degradation of service. The goal of incident management is to restore normal operation as fast as possible. Root cause analysis is explicitly secondary and belongs to a different practice. On exam questions, if the scenario describes something broken that needs to be fixed quickly, the answer is incident management.

ITIL’s “problem” is the cause, or potential cause, of one or more incidents. The goal of problem management is root cause identification, not service restoration. On exam questions, if the scenario describes investigating why something keeps breaking, the answer is problem management.

ITIL’s “known error” is a problem that has been analyzed but not yet resolved: the root cause has been identified, but a permanent fix has not been implemented. A workaround may exist. On exam questions, if the scenario describes a condition where the cause is known and a workaround is in place pending a permanent fix, the answer involves known error records within problem management.

The important thing to hold onto is this: ITIL’s definitions are not wrong. They are a coarser-grained version of a more precise taxonomy. You can always translate down to a coarser vocabulary once you understand the underlying structure. What you cannot do is answer exam questions in a richer vocabulary than the question was written to receive. Know both. Use the right one in the right context.
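The three translations above reduce to two scenario cues, which can be sketched as a lookup function. This is a study aid under stated assumptions, not an official ITIL decision procedure: the function name `itil_exam_answer` and its two boolean parameters are hypothetical, and real exam questions carry more nuance than two flags.

```python
def itil_exam_answer(goal_is_restoration: bool, cause_identified: bool) -> str:
    """Map two scenario cues to the ITIL answer described in the text (hypothetical helper)."""
    if goal_is_restoration:
        # Something is broken and needs to work again quickly.
        return "incident management"
    if cause_identified:
        # Cause understood, permanent fix still pending.
        return "problem management (known error)"
    # Investigating why something keeps breaking.
    return "problem management"


print(itil_exam_answer(goal_is_restoration=True, cause_identified=False))
print(itil_exam_answer(goal_is_restoration=False, cause_identified=True))
print(itil_exam_answer(goal_is_restoration=False, cause_identified=False))
```

Restoration is checked first because, in ITIL’s vocabulary, the goal of the work decides the practice: a team restoring service is doing incident management even when the cause happens to be known.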

The Broader Pattern: Vocabulary Imposition and Its Costs #

This vocabulary collision is not unique to ITIL and the operational problem taxonomy. It is the same failure mode as the “recovered” schema mismatch in patient coordination, the fire danger rating collapse between field and meteorological components, and the GeoJSON feed break between partner organizations. In every case, the underlying operational reality is the same. The representations differ. The interfaces fail.

The difference in the credentialing context is that the vocabulary imposition is intentional and encoded into a test. The framework’s authors defined their terms inside one professional community and then used those definitions as universal gatekeeping criteria. A practitioner arriving with a richer vocabulary from a different professional domain fails not because they know less, but because they translated into a dialect the test cannot recognize.

Acknowledging this is not a complaint. It is a diagnostic. The correct response is the same one that works for every schema mismatch: know both vocabularies, know when each applies, and build the translation layer explicitly rather than assuming it will happen on its own.

That translation layer is what this document is.

Related Entries #

- Federation Architecture: Why Integration Fails and Federation Works

- Interface Stewardship: Governance Without Authority

- Schema Governance: When the Data Is Correct and the Meaning Is Wrong

- Continual Improvement vs. Project Completion: A Practitioner’s Distinction

From Stories to Exam: The Pattern Map #

The scenarios in this document are not decorative. Each one is an anchor for one or more exam concepts. This section makes those connections explicit so you can use the stories as retrieval cues during the exam. When you read a question and the scenario feels familiar, you have already done the conceptual work. Trust the pattern and translate into ITIL’s vocabulary.

The GeoJSON feed break is your anchor for: Partners/Suppliers dimension, Change Enablement practice, the guiding principle “think and work holistically,” and the difference between a normal change (what it should have been) and an uncoordinated deployment (what it was). Any exam question describing a partner action that caused downstream service failure without anyone making an error belongs here.

The fire danger rating collapse is your anchor for: Information/Technology dimension, service configuration management, monitoring and event management, and schema governance as an information practice problem. Any exam question describing two systems producing incompatible representations of the same operational reality belongs here.

The “recovered” schema mismatch is your anchor for: service integration, service desk as the boundary where definitional conflicts surface, incident management when the conflict produces a care failure, and continual improvement when the pattern recurs. Any exam question describing a word or concept that means different things to different stakeholders, with care or coordination consequences, belongs here.

The T Drive and golden dataset failure is your anchor for: Organizations/People dimension, knowledge management practice, the guiding principle “focus on value,” and the failure mode of optimizing a metric at the expense of a mission. Any exam question describing perverse incentives, workarounds that became permanent, shadow IT, or departure-dependent institutional memory belongs here.

The Akamai power scenario is your anchor for: the incident versus problem distinction, the guiding principle “think and work holistically,” problem management’s root cause analysis phase, and the transition from problem to known error to decision. Any exam question describing a troubleshooting effort that is looking in the wrong place, or a root cause that falls outside the obvious diagnostic model, belongs here.

The RS-CAT documentation infrastructure is your anchor for: knowledge management practice, the DIKW model, continual improvement as an ongoing practice rather than a project, and service catalogue management as the practice of making knowledge discoverable. Any exam question describing how organizations ensure institutional knowledge survives personnel turnover belongs here.

The exam will present these patterns in unfamiliar surface forms — different industries, different technology stacks, different organizational contexts. The underlying structures are the same ones you have been navigating for twenty years. Read for the structure, not the surface. Name the ITIL practice that addresses it. Answer in their vocabulary.

Exam Quick Reference: ITIL Vocabulary vs. Operational Translation #

This section is the night-before review. Three columns: the ITIL term, what ITIL says, and what you already know it as. When the exam uses their term, recognize your concept. Answer in their dialect.

| ITIL Term | ITIL Definition (exam language) | Operational Translation |
|---|---|---|
| Incident | Any unplanned interruption or quality reduction of a service | Something is broken. Restore it. Root cause is not your problem right now. |
| Problem | Cause or potential cause of one or more incidents | Why does this keep breaking? Investigation mode. Service may already be restored. |
| Known Error | A problem with identified root cause, permanent fix not yet implemented | You know why. A workaround exists. Fix is pending. By your taxonomy: a Decision waiting on resources or authorization. |
| Event | Any change of state that has significance for service management | Something happened that the system noticed. Informational, warning, or exception. |
| Change | Adding, modifying, or removing anything that could affect services | The GeoJSON migration. Should have been a normal change with partner notification. |
| Standard Change | Low-risk, pre-authorized, routine change with established procedure | Rote. No approval needed. Do it. |
| Normal Change | Assessed, authorized, scheduled through change process | Most changes. Requires review and approval before execution. |
| Emergency Change | Must be implemented immediately; expedited authorization | Fire is happening now. Cut the process short but document after. |
| Service | A means of enabling value co-creation by facilitating outcomes customers want, without the customer having to manage specific costs and risks | What you provide so someone else can do their mission without owning the underlying complexity. |
| Value | Perceived benefits, usefulness, and importance of something to a stakeholder | Always defined by the receiver. Not by what you built or delivered. |
| Outcome | Result for a stakeholder enabled by outputs | What changed for someone because of what you delivered. Not the deliverable itself. |
| Output | Tangible or intangible deliverable of an activity | The thing you produced. Not the same as whether it mattered. |
| Utility | Fit for purpose: does it do what it is supposed to do | It works when conditions are right. |
| Warranty | Fit for use: available, reliable, secure, with sufficient capacity | It works consistently enough to depend on. |
| Risk | Possible event that could cause harm or loss | Probability times impact. ITIL treats it as input to every practice decision. |
| Service Offering | Specific mix of goods, access to resources, and service actions for a defined consumer | What is actually on the table. Not what the consumer assumed was on the table. |
| SLA | Service Level Agreement: documented targets between provider and customer | External commitment. What you promised them. |
| OLA | Operational Level Agreement: internal agreement supporting SLA delivery | Internal commitment. What your teams promised each other to make the SLA possible. |
| Underpinning Contract | Agreement with external supplier supporting service delivery | What a vendor promised you. Supports the SLA from outside the organization, as the OLA does from inside. |
| CMDB | Configuration Management Database: record of configuration items and their relationships | The authoritative map of what exists and how it connects. Start here before you change anything. |
| Configuration Item (CI) | Any component that needs to be managed to deliver a service | Anything in the CMDB. Hardware, software, documentation, people in some models. |
| Service Catalogue | Visible, approved services available to customers | What you actually offer. Not what you used to offer. Not what someone thinks you offer. |
| Continual Improvement | Ongoing activity to improve products, services, and practices | Not a project. Never done. Feeds every other practice. |
| DIKW Model | Data, Information, Knowledge, Wisdom: hierarchy of how raw data becomes actionable insight | Data is a fact. Information is data in context. Knowledge is information applied. Wisdom is knowing which knowledge to use when. |
| Service Desk | Single point of contact between provider and users for all service requests and incidents | Not just a team. A practice. Can be virtualized, distributed, or AI-assisted. |
| Service Request | Formal request for something predefined: access, information, standard change | Not an incident. Nothing is broken. Someone wants something that is already available. |
| Release | Making a new or changed service available for use | The decision that something is ready. Separate from the act of deploying it. |
| Deployment | Moving new or changed components to the live environment | The technical act. Separate from the decision that it is ready. |
| Four Dimensions | Organizations/People, Information/Technology, Partners/Suppliers, Value Streams/Processes | Every service design decision has to account for all four. Optimizing one at the expense of another produces systemic failure. |
| Guiding Principles | Focus on value; Start where you are; Progress iteratively with feedback; Collaborate and promote visibility; Think and work holistically; Keep it simple and practical; Optimize and automate | Seven principles, not rules. Applied using judgment. The exam tests recognition of which principle a scenario illustrates. |
| Service Value System (SVS) | Overarching framework: governance, guiding principles, Service Value Chain, practices, continual improvement | The whole picture. How all the parts connect into a functioning service organization. |
| Service Value Chain (SVC) | Six activities: Plan, Improve, Engage, Design/Transition, Obtain/Build, Deliver/Support | A coordination loop, not a sequence. All six are active simultaneously. Failure in one degrades the whole. |

The Three Exam Questions You Will See Repeatedly #

Incident or problem? If someone is trying to restore service: incident. If someone is trying to find out why it happened: problem. These are different practices with different goals and, in mature organizations, different teams.

Which guiding principle? Read for the operational emphasis in the scenario. Reusing existing tools rather than buying new ones is “start where you are.” Building incrementally and checking results is “progress iteratively with feedback.” Removing steps that add no value is “keep it simple and practical.”

Which change type? The key distinction is not risk level alone. It is how and when authorization happens. A standard change is pre-authorized as a class. Someone assessed this type of change once, approved it as a standing procedure, and every subsequent instance inherits that authorization. You execute and document; you do not ask permission each time. A normal change requires per-instance authorization: someone reviews this specific change, assesses its risk and impact, and approves it before it proceeds. An emergency change also requires per-instance authorization, but assessment and authorization are expedited, often through a smaller dedicated change authority, and some documentation may be deferred until after implementation.

A note on why this particular “change type” distinction trips people up

Standard and normal are functional synonyms in operational English. A standard operating procedure is a normal operating procedure. Standard practice is normal practice. Your entire professional vocabulary before encountering ITIL has trained you to treat these words as interchangeable, which means ITIL is asking you to hold a technical distinction between two terms your career has spent years collapsing into one.

The underlying distinction is not about the words. It is about authorization timing and scope. “Standard” (likely from “standardized”) marks a change whose authorization was granted once, at the class level, and is inherited by every instance that follows the approved procedure. “Normal” likely means the normative process, the default path through full per-instance review, not ordinary or routine in the everyday sense.

<Pedant grumble incoming> ITIL could have named these “pre-authorized” and “reviewed” and the distinction would have been self-evident. It did not, and the vocabulary is now encoded into study guides, exam questions, and organizational ITSM implementations at sufficient scale that it will not change. The switching cost exceeds the readability benefit at this point.

What this means practically is that you cannot trust your instincts on these two terms. “Standard” feels like it should mean ordinary and unremarkable. “Normal” feels like it should mean the same thing. Both feelings are wrong in ITIL’s usage. Anchor to authorization timing, not to the words themselves.

Pre-authorized as a class: standard.

Authorized per instance before execution: normal.

Authorized per instance on an expedited path because the building is metaphorically on fire: emergency.

The practical test: if you have done this exact thing before under an approved procedure and nothing about this instance is different, it is standard. If someone needs to look at this specific change and say yes before you proceed, it is normal. If the building is on fire, it is emergency.

That last test is worth holding onto. Not because it is clever, but because it is the kind of operational shorthand that experienced practitioners develop when they have absorbed a framework deeply enough to stop reciting it. The goal of this entire document is to get you to that point before the exam, not after.
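The practical test above can be written down as a short function. This is a sketch under stated assumptions, not ITIL’s own procedure: the names `ChangeType` and `classify_change` and the three boolean inputs are hypothetical, and checking the standard case first is an assumption that follows the order in which the test is stated.

```python
from enum import Enum


class ChangeType(Enum):
    STANDARD = "standard"
    NORMAL = "normal"
    EMERGENCY = "emergency"


def classify_change(covered_by_preapproved_procedure: bool,
                    instance_differs: bool,
                    building_on_fire: bool) -> ChangeType:
    """Hypothetical encoding of the practical test for ITIL change types."""
    if covered_by_preapproved_procedure and not instance_differs:
        # Authorization was granted once for the whole class of change.
        return ChangeType.STANDARD
    if building_on_fire:
        # Still authorized per instance, but on an expedited path.
        return ChangeType.EMERGENCY
    # Default path: someone reviews this specific change before it proceeds.
    return ChangeType.NORMAL


# A routine change under an approved procedure, with nothing unusual about it.
print(classify_change(True, False, False).value)   # standard
# A change with no standing procedure and no urgency, like the GeoJSON migration.
print(classify_change(False, False, False).value)  # normal
# A change that cannot wait for the full review cycle.
print(classify_change(False, False, True).value)   # emergency
```

Note that a pre-approved procedure stops applying the moment the instance differs from what was approved, at which point the change falls back to normal or emergency handling.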

The credential itself is narrow. Forty questions, 65 percent threshold, closed book. For anyone who has coordinated service delivery across autonomous organizational entities under real operational pressure, the pass is not the hard part. The vocabulary translation is the hard part, and it is hard in a specific direction: not from ignorance toward knowledge, but from a richer operational vocabulary toward a coarser institutional one. You have to know both registers and know when to use each. The exam requires one. The work requires the other.

This is not the first time I have built a crosswalk of this kind. The PMP, PMI-ACP, and PMI-PBA are each multi-hour proctored exams with serious preparation requirements. For each one, the same pattern held: I had been doing the work for years before I studied for the credential, and the credential’s vocabulary was a translation problem, not a knowledge problem. The crosswalk was the preparation. That practice is documented in the Wrong Tools field note for the PMI framework specifically, where the argument is that project management credentials are genuinely valuable and structurally insufficient for federated coordination work, for reasons PMI has published but the exam prep pipeline does not transmit. The same logic applies here. The ITIL 4 credential is worth having. It describes real problems with real vocabulary. It does not describe all of the problems, and knowing where its vocabulary runs out is part of carrying it well.

What ITIL 4 gives you is standing. When you walk into a service design conversation, a procurement review, or a governance board using ITIL vocabulary, you are speaking a language that has been encoded into job descriptions, contract requirements, and HR screening filters. That standing is real and worth having. The credential is not theater. It is infrastructure.

What ITIL 4 does not give you is a framework for environments where service relationships cannot be established, where partners do not report to the coordinating function, and where coordination either happens because autonomous entities find it in their interest or it does not happen at all. ITIL 4 is visibly moving toward that terrain. The shift from v3 to v4 is evidence of that movement. It has not arrived. The gap between where ITIL 4’s authority ends and where operational coordination must continue is exactly where federation doctrine lives.

This is not a criticism of ITIL 4. It is a map of two complementary tools. Use ITIL 4 vocabulary to describe what you are doing inside organizational boundaries, across service relationships, and in governance contexts where that vocabulary is expected. Use federation doctrine to address what happens at the boundary conditions ITIL 4 was not designed to reach. The practitioners who carry both fluently are the ones who can operate inside institutional structures without being limited by them.

You have been doing the work. Now you have the vocabulary. Pass the exam and then put it in the right place in your toolkit: useful credentialing infrastructure for a capability that was already there.

Last Updated on March 20, 2026