The Disconnected Oracle: Local Inference Patterns for DDIL

The Observation: Intelligence Without the Pipe

We currently treat Artificial Intelligence as if it were a utility, like water or electricity. We assume the “brain” lives in a data center in Virginia and that our job is simply to open the tap (the API) to let the intelligence flow to the edge.

This is a luxury belief. It relies on a premise that does not exist in the real world: infinite, unbroken connectivity.

In a DDIL (Denied, Disrupted, Intermittent, Limited) environment, the cloud is not a resource. It is a liability. If your decision support system goes dark because a backhoe hit a fiber line, or because a jamming signal saturated the spectrum, you do not have a system. You have a dependency.

The current rush to deploy “AI everywhere” misses a boring, physical truth. The smartest model in the world is useless if every request to it ends in a timeout or a 5xx error. To build resilient systems, we must stop treating the edge like a smaller version of the cloud. We have to treat it like a different planet with its own physics.

The Bridge: We Have Been Here Before (2006)

This is not a new problem. We simply have new vocabulary for it.

In 2005 and 2006, the industry was not trying to run Large Language Models at the edge. We were trying to run heavy GIS (Geographic Information Systems) servers and serve map tiles to response teams. The technology was different, but the constraints were identical.

I built a Mobile Mapping Unit inside a 26-foot box truck to serve the Rhode Island Urban Search and Rescue task force. We packed it with servers, plotters, and cooling units. We drove it into disaster zones.

Field Notes: What The Mobile Mapping Unit Taught Me About Forward-Deployed Systems

I detailed the mechanics of that truck in a previous field note (What The Mobile Mapping Unit Taught Me…). The primary lesson was not about software architecture. The lesson was that “Server Room Temperature” is a myth in the field. We fought heat. We fought vibration. We fought dirty power that choked the UPS units.

Today, when I set up a local LLM on surplus hardware, I am not looking at the token count. I am looking at the wattage. If you cannot power the GPU with a generator that is sputtering on bad fuel, your inference speed is zero. The “Disconnected Oracle” cannot be a fragile thoroughbred. It must be a mule.
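
To make the wattage point concrete, here is a minimal power-budget sketch. Every number and name in it is an assumption for illustration (the 0.85 inverter efficiency, the 0.7 generator derating, the 300 W GPU), not a measurement from the truck or from any specific hardware.

```python
def runtime_hours(battery_wh: float, load_w: float,
                  inverter_efficiency: float = 0.85) -> float:
    """Hours a battery can sustain a steady load, after inverter losses."""
    if load_w <= 0:
        raise ValueError("load_w must be positive")
    return battery_wh * inverter_efficiency / load_w


def fits_generator(load_w: float, generator_rated_w: float,
                   derate: float = 0.7) -> bool:
    """True if the load fits under a derated generator rating.

    The derate factor is an assumed safety margin for bad fuel, heat,
    and altitude; pick your own from field experience.
    """
    return load_w <= generator_rated_w * derate


# Hypothetical rig: a 300 W GPU plus 100 W for the rest of the box.
load_w = 300 + 100
print("Fits a 1000 W generator:", fits_generator(load_w, generator_rated_w=1000))
print("Hours on a 1000 Wh battery:", runtime_hours(1000, load_w))
```

If the first check fails, the model choice is already wrong, no matter what the benchmark says.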

The Pattern: Two Rules for Offline Intelligence

When you sever the connection to the internet, you lose two things we take for granted: the ability to “Google it” to check facts, and the infinite compute required to be polite. This dictates two strict design patterns for local AI.

1. The Immutable Reference (Solving the Drift)

In a connected world, we solve data conflicts by checking the central database (the “Single Source of Truth”). In a disconnected world, that source does not exist.

Field Guide: Disconnected Editing Is Not a GIS Feature. It Is a Survival Pattern.

In my GIS work, we learned that “disconnected editing” is dangerous. (See: Disconnected Editing Is Not a GIS Feature.) If two operators edit the same map while offline, who wins when they reconnect? The merge conflict can destroy data integrity.

The same logic applies to Edge AI. You cannot allow a local model to “learn” or hallucinate freely without a guardrail. You need a “Reference Implementation” stored locally. This is a static vector store, built from a library of PDFs, Field Manuals, or repair guides, that the model can read but cannot alter. The model becomes a retrieval interface, not a creative writer. It must cite the local document or refuse to answer. We do not need creativity at the edge. We need retrieval.

2. Cognitive Load and the “Terse” Interface

The second constraint is the user. An operator in a DDIL environment is usually cold, tired, or scared. They do not want to have a “chat.” They want a coordinate. They want a torque setting.

Flying The Picture: What A Little Cessna Taught Me About Mission Systems

I wrote about the difference between “flying the plane” and “managing the mission” in a previous note (Flying The Picture). In a Cessna, you do not have hands free to prompt-engineer a chatbot. The interface needs to be terse. It needs to be “Answer-First.”

If the system forces the user to be polite, or if it buries the answer in three paragraphs of “I hope this helps” conversational filler, the system is dangerous. The Disconnected Oracle is not a conversationalist. It is a retrieval instrument. It should feel like a command line, not a concierge.
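
One way to enforce “Answer-First” is a post-processing filter between the model and the screen. The sketch below is an assumption about how that could look: the filler phrase list is hypothetical and would need tuning against whatever model is actually deployed.

```python
import re

# Hypothetical filler sentences. Only whole sentences are dropped, so
# "Sure, the torque is 140 Nm." survives intact.
_FILLER = re.compile(
    r"(sure|certainly|of course|great question|i hope this helps|happy to help)[.!]*",
    re.IGNORECASE,
)


def terse(raw: str, max_sentences: int = 1) -> str:
    """Drop filler sentences and keep only the first substantive ones."""
    sentences = re.split(r"(?<=[.!?])\s+", raw.strip())
    kept = [s for s in sentences if s and not _FILLER.fullmatch(s)]
    return " ".join(kept[:max_sentences])


reply = "Sure! Great question. The torque setting is 140 Nm. I hope this helps!"
print(terse(reply))
```

The filter is deliberately dumb. It runs in microseconds, needs no second model, and fails toward showing too much rather than hiding the answer.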

The Directive: Audit the Dependency

If you are building for the edge today, stop buying cloud credits and start buying surplus hardware.

We need to stop training models to be encyclopedias and start training them to be field manuals. This requires a shift in how we validate capability:

- Pull the Plug. Literally unplug the ethernet cable. Does the system still work? If not, it is not “Edge Ready.” It is just a cached web page.

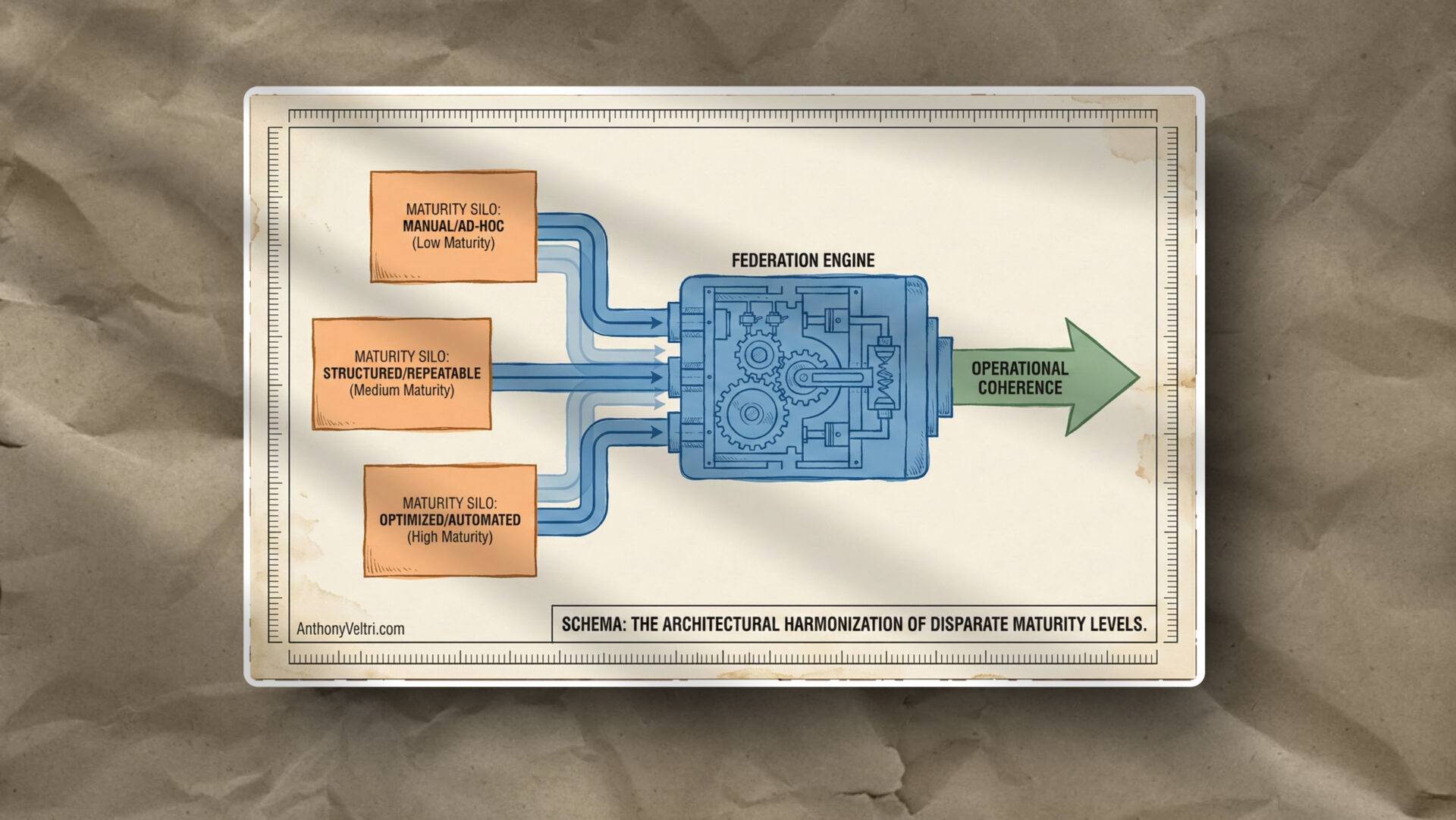

- Compress the Context. You do not need the whole internet. You need the “Mission Corpus.” (This aligns with the architecture of my operational federation dead reckoning project.)

- Respect the Hardware. A rack in a clean room is theory. A laptop in a humid tent is practice.

The future of AI at the edge is not about who has the biggest model. It is about who can run the most useful model on a battery that is running out of juice.

Last Updated on May 2, 2026