No Amount of Federation Saves a Broken Anchor Point

This piece is part of a three-part series on ground truth, federation, and the anchor point. Read the series guide for context on how the three pieces fit together.

There is a distinction that does not get made often enough in conversations about data architecture.

Data is useful. Data is not always golden. And that is okay.

Treating non-golden data as useful is not the failure mode. The failure mode is treating useful data as golden when it is not, then building hard operational decisions on top of it without realizing the difference.

I learned this across several domains, sometimes by doing it right and sometimes by watching what happened when it went wrong.

What the Sensor Sees Before You Do

Remote sensing platforms share one characteristic across every domain I have worked in. They reveal signals before those signals are perceptible to the human eye.

Beetle-killed timber shows spectral stress signatures weeks before the needles visibly redden. A crop field treated with differential fertilizer concentrations shows reflectance variation before a scout walking the rows notices anything wrong. Compressed grass from human foot traffic shows textural change in airborne imagery before the path is visible at ground level.

At DHS I was working with airborne collection across a range of platforms and sensors. Some satellite-derived. Some manned aircraft. Some, in contexts I will not detail here, considerably more capable than either. One of the more memorable demonstrations I attended involved change detection applied to security perimeters. Specifically, detecting human traversal across different ground cover types using spectral and textural analysis of airborne imagery.

The physics was identical to agricultural change detection. The same convolution kernels that detect differential crop stress from varying fertilizer application can detect compressed grass from foot traffic. The same principles I had applied in natural resources work, studying fire-damaged timber, beetle kill, and soil disturbance, appeared again in an entirely different operational context. The sensor does not care about the application. It sees what it sees.
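As a minimal sketch of that idea, assuming two co-registered single-band images held as NumPy arrays, the Python below smooths the absolute difference between them with a plain box kernel and thresholds the result. The function names, the 3x3 kernel, and the 0.1 threshold are illustrative assumptions, not the kernels or parameters used in any program described here.

```python
import numpy as np


def box_filter(image: np.ndarray, size: int = 3) -> np.ndarray:
    """Convolve with a size x size mean kernel (odd size, edge-padded)."""
    pad = size // 2
    padded = np.pad(image, pad, mode="edge")
    out = np.zeros_like(image, dtype=float)
    height, width = image.shape
    # Sum every shifted copy of the image covered by the kernel window.
    for dy in range(size):
        for dx in range(size):
            out += padded[dy:dy + height, dx:dx + width]
    return out / (size * size)


def detect_change(before: np.ndarray, after: np.ndarray,
                  threshold: float = 0.1, size: int = 3) -> np.ndarray:
    """Flag pixels whose locally averaged absolute change exceeds threshold."""
    diff = np.abs(after.astype(float) - before.astype(float))
    return box_filter(diff, size) > threshold


# Synthetic scene: uniform reflectance, then a strip that changes.
before = np.full((8, 8), 0.5)
after = before.copy()
after[3:5, 1:7] = 0.2  # hypothetical strip of altered reflectance

mask = detect_change(before, after)
print(mask.astype(int))
```

Nothing in `detect_change` knows whether the changed strip is a fertilizer gradient or a footpath. That distinction lives in the question being asked and the threshold chosen, not in the kernel.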

What changes is the question you are asking of the data. And the question determines what it means for that data to be trustworthy enough to act on.

In the agricultural case a false positive costs money. You send a crew to inspect a field that turns out to be fine. In the security perimeter case the consequences of false positives and false negatives are different in kind, not just in degree.

The analysts working with those detection outputs were not reading correlation coefficients. They were not imputing values from adjacent pixels. But the people who built and trained the models behind those algorithms certainly were. Someone had walked the fields, logged the footwear types, noted the ground conditions, and counted the hours since traversal. That ground truth work was invisible by the time it reached the operational layer. But it was the reason the output could be trusted at all.

The anchor point existed. It was just buried under everything built on top of it.

Situational Awareness Is Not a Golden Dataset. That Is Fine.

We also did substantial oblique collection, particularly around critical infrastructure and pre-event site preparation. Oblique imagery of urban environments, facility approaches, and infrastructure corridors.

That imagery was not validated against ground control points the way a wildland fire fuel study would be. It was not checked for geometric accuracy with the rigor you would apply before calculating standoff distances or sightline maps.

And that was the right call.

Because that imagery was being used for situational awareness. What does this generally look like? Where are the major access points? How does this compare to the last collection? For those questions, precise geometric calibration is not the contract. Timeliness and coverage are. The imagery was useful. It was not golden. Nobody needed to rivet their name to it because no decision with hard, irreversible consequences depended on it being precisely right.

The moment that changes, the moment you need to calculate a sightline, establish a standoff radius, or determine whether a structure falls within a defined perimeter, you need ground control points. You need validation. You need an accountable human name attached to the output. You need the Mike E. plate on the turbine.

The question determines the required quality of the answer. This is not a failure of the useful data. It is clarity of purpose.
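One way to make that rule explicit is a small gate that checks a collection against the use it is being put to. The sketch below is hypothetical throughout: the `Use` categories, the `Collection` fields, and the 24-hour freshness limit are assumptions made for illustration, not an operational standard.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional, Tuple


class Use(Enum):
    SITUATIONAL_AWARENESS = "situational awareness"
    MEASUREMENT = "measurement"  # sightlines, standoff radii, perimeter tests


@dataclass(frozen=True)
class Collection:
    name: str
    age_hours: float
    has_ground_control: bool
    accountable_owner: Optional[str] = None


def fit_for(collection: Collection, use: Use,
            max_age_hours: float = 24.0) -> Tuple[bool, List[str]]:
    """Return (fit, problems) for using this collection for this purpose."""
    problems: List[str] = []
    if use is Use.SITUATIONAL_AWARENESS:
        # Timeliness is the contract here, not geometric precision.
        if collection.age_hours > max_age_hours:
            problems.append("collection is too old")
    else:
        # Hard decisions need validation and a named, accountable human.
        if not collection.has_ground_control:
            problems.append("no ground control points")
        if not collection.accountable_owner:
            problems.append("no accountable owner")
    return (not problems, problems)


oblique = Collection("oblique pass", age_hours=6.0, has_ground_control=False)
awareness_ok, _ = fit_for(oblique, Use.SITUATIONAL_AWARENESS)
measure_ok, measure_problems = fit_for(oblique, Use.MEASUREMENT)
print(awareness_ok, measure_ok, measure_problems)
```

The same oblique collection passes for situational awareness and fails for measurement. The data did not change. The question did.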

The Senior Leadership Problem Is a Governance Problem

The most common misconception I encountered briefing senior executives on collection capabilities was a version of the same thing.

“Why can we not just get a picture of this?”

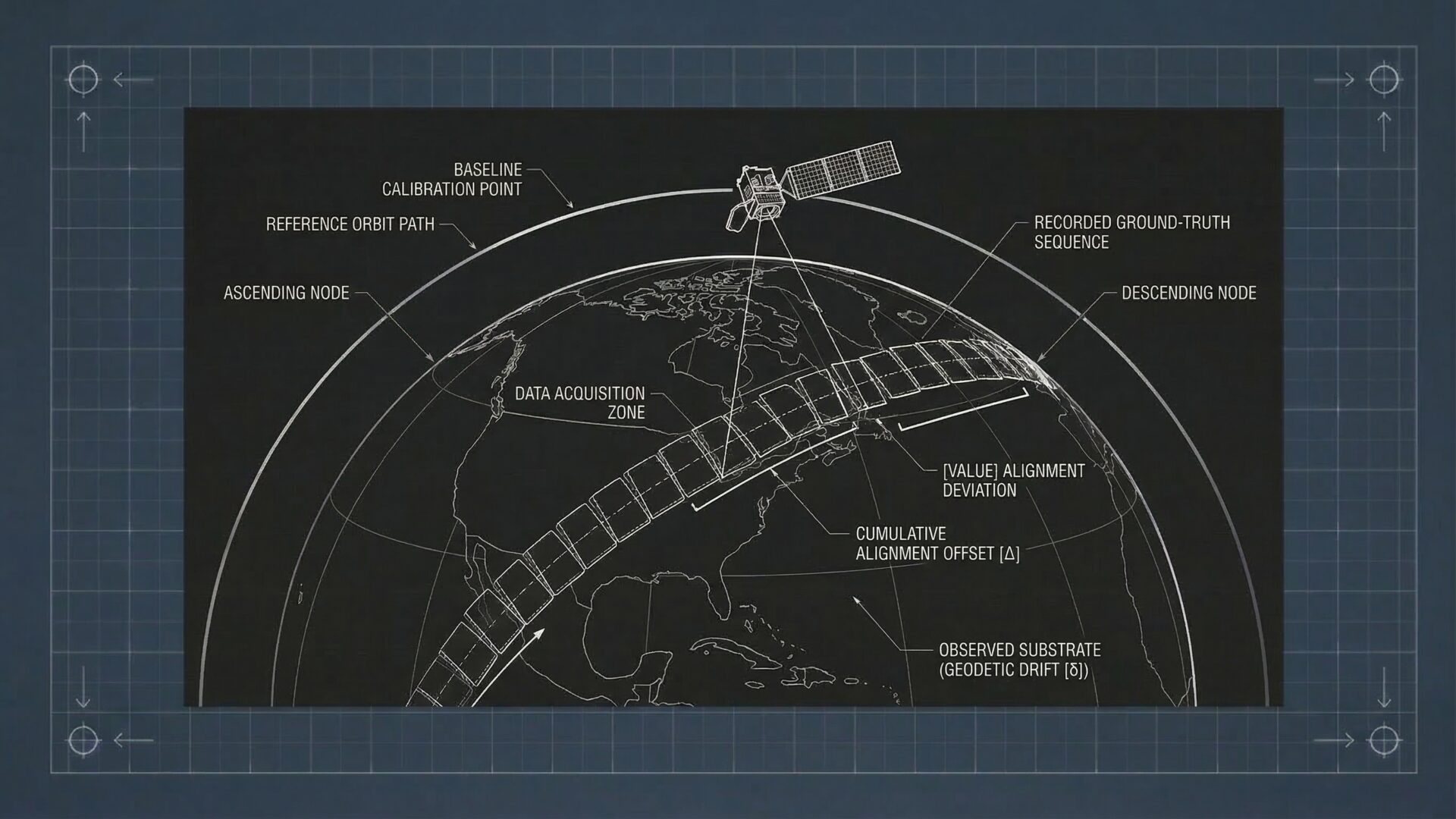

The assumption behind the question was that a satellite was a persistent asset, something like a security camera you could point at will. The actual constraints were orbital mechanics, sensor physics, atmospheric interference, and the fundamental reality that cloud cover defeats optical sensors regardless of how much the technology has advanced.

Most of those executives had flown commercially in bad weather. They understood turbulence. They understood that a shaky camera in thick clouds does not produce useful imagery. Once you made that translation the conversation improved. But you had to make it every time, with every new executive, on every new program.

The underlying governance failure was that nobody had written the contract. Nobody had documented what this data product could and could not tell you, under what conditions, with what caveats, and at what refresh rate. The data existed. The golden dataset definition did not. So every briefing started from scratch negotiating what the data actually was.
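A written contract of that kind does not need to be elaborate. The sketch below, in Python, records the items named above (what the product can and cannot tell you, conditions, caveats, refresh rate) and renders them as a short briefing text. The class name, field names, and every example value, including the refresh interval, are hypothetical placeholders rather than a description of any real product.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class DataProductContract:
    product: str
    can_answer: List[str]
    cannot_answer: List[str]
    conditions: List[str]
    caveats: List[str]
    refresh_hours: float

    def brief(self) -> str:
        """Render the contract as plain text for a briefing slide or README."""
        lines = [f"{self.product} (refresh: every {self.refresh_hours:g} h)"]
        sections = [
            ("Can answer", self.can_answer),
            ("Cannot answer", self.cannot_answer),
            ("Conditions", self.conditions),
            ("Caveats", self.caveats),
        ]
        for label, items in sections:
            lines.append(f"{label}:")
            lines.extend(f"  - {item}" for item in items)
        return "\n".join(lines)


# Illustrative values only; not a real product specification.
contract = DataProductContract(
    product="Optical satellite imagery (example)",
    can_answer=["What does this site generally look like?"],
    cannot_answer=["What is happening there right now, on demand?"],
    conditions=["Requires a cloud-free pass over the site"],
    caveats=["Not a persistent asset; collection depends on the orbit"],
    refresh_hours=24.0,  # placeholder, not a real revisit rate
)
print(contract.brief())
```

Once something like this exists and is kept current, a briefing can start from the contract instead of renegotiating what the data is.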

That is expensive. It is also avoidable.

Why I Went to Flight School

I hold an FAA Private Pilot certificate, airplane single engine land. I wrote about how I earned it and what it cost me in an earlier field note. The short version is this: I was working on DHS programs that depended heavily on airborne collection, and I reached a point where I knew enough to repeat the stock answers about safety and constraints but did not feel them in my bones.

When the opportunity appeared to train alongside US Marine Corps initial flight students at Dulles Aviation in Manassas, I took it. On paper that looks like a hobby. In reality it rewired how I think about any mission system that depends on anything in the sky.

After enough hours in a Cessna 172 on gusty days, hearing a crew say “we cannot fly today” or “the data quality will not justify the exposure” lands differently. You stop hearing an excuse. You hear someone quietly saying that the risk envelope is wrong, that the sky is in charge, not us.

The people closest to the physical reality of collection were the ground truth validators of what the data could honestly claim. If I could not stand next to them credibly I could not protect downstream users from unrealistic expectations. Or more dangerously, from trusting a product the collection conditions could not actually support.

That is the Mike E. principle applied inward. Put your name on enough of the stack that you know where the weak points are.

The Scale Changes. The Principle Does Not.

What connects agricultural change detection, footprint signatures on security perimeters, wildland fire fuel mapping, and satellite-derived meteorological products is not the sensor platform or the application domain. It is the same underlying reality.

The sensor always sees the signal before the human does. The question is always what consequence follows from being wrong about the answer. And that question determines what it means for a dataset to be golden rather than merely useful.

At continental scale, with dozens of member states coordinating national weather forecasting, aviation safety, and climate monitoring on the basis of shared earth observation products, the calibration and validation work that anchors satellite-derived data to physical reality is not overhead. It is the reason the data is worth anything at all.

When satellite imagery reaches the analyst, when the meteorological product reaches the forecaster, the ground truth work that made it trustworthy is invisible. But it is there. Someone walked the equivalent of those fields. Someone validated the chain. Someone has their name on the turbine.

I wrote about what that means after I visited Hoover Dam and noticed a small plaque on one of the generators: Proudly Maintained By Mike E. That image has been living in my head as a systems principle ever since. You can read the full piece in the related links below. The short version is this: a brass plaque with a human name attached to a critical piece of machinery means something fundamentally different from “Maintained by Facilities Department.” It means someone is proud enough to stake their reputation on whether this thing works.

No amount of federation saves a broken anchor point. Hoover Dam does not become more reliable because you build more distribution infrastructure downstream. It becomes more reliable because Mike E. has his hands on it, his reputation attached to it, and a gauge that tells him whether the thing is actually working.

The same is true of any data product that people rely on when the stakes are real.

Not all data needs to be golden. What you deliberately choose to make golden should be treated as a product. Owned, maintained, and cared for. Not as exhaust that just happened to land in one place.

This field note relates to the following doctrine guides: Doctrine 20: Golden Datasets: Putting Truth In One Place Without Pretending Everything Is Perfect | Doctrine 04: Useful Interoperability Is the Goal, Not Perfect Interoperability | Flying The Picture: What A Little Cessna Taught Me About Mission Systems | Hoover Dam Lessons: Proudly Maintained By Mike E.

Last Updated on March 18, 2026